Jonathan Mugan, a computer science researcher specializing in machine learning and AI, gave a presentation titled “What Deep Learning Means for Artificial Intelligence”.

AI through the lens of System 1 and System 2

In Thinking, Fast and Slow, psychologist Daniel Kahneman describes humans as having two modes of thought: System 1 and System 2.

System 1: Fast and Parallel

Subconscious: E.g., face recognition or speech understanding.

We underestimated how hard it would be to implement. E.g., we thought computer vision would be easy.

AI systems in these domains have been lacking, for three reasons:

- Serial computers too slow

- Lack of training data

- Didn’t have the right algorithms

System 2: Slow and Serial

Conscious: E.g., when listening to a conversation or making PowerPoint slides.

We assumed it would be the most difficult to implement.

E.g., we thought chess was hard.

AI systems in these domains are useful but limited. They are called GOFAI (Good Old-Fashioned Artificial Intelligence) and include:

- Search and Planning

- Logic

- Rule-based systems

Deep learning begins with a little function

It all starts with a humble linear function called a perceptron: a weighted sum of its inputs plus a bias.
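A minimal sketch of that little function in plain Python, assuming the usual weighted-sum-plus-bias form. The weights and inputs below are arbitrary illustrative values, not anything from the presentation. Rosenblatt's classic perceptron also thresholds this sum to produce a 0/1 decision, which is shown separately in `perceptron_classify` (a hypothetical helper name).

```python
def perceptron(inputs, weights, bias):
    """Linear part of a perceptron: weighted sum of the inputs plus a bias."""
    return sum(w * x for w, x in zip(weights, inputs)) + bias


def perceptron_classify(inputs, weights, bias):
    """Classic perceptron decision: threshold the linear output at zero."""
    return 1 if perceptron(inputs, weights, bias) > 0 else 0


# Example with hand-picked (hypothetical) weights: 0.5*1.0 - 0.25*2.0 + 0.1
print(perceptron([1.0, 2.0], weights=[0.5, -0.25], bias=0.1))  # prints 0.1
print(perceptron_classify([1.0, 2.0], weights=[0.5, -0.25], bias=0.1))  # prints 1
```

On its own, the function can only draw a straight line (or flat plane) through its input space, which is why the next step matters.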

The little functions are chained together

Deep learning comes from chaining many of these little functions together; in this setting, each little function is called a neuron.

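To create a neuron, we add a nonlinearity to the perceptron. This gives extra representational power when we chain neurons together, because a chain of purely linear functions always collapses into a single linear function.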
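Why the nonlinearity matters can be checked in a few lines. This is a minimal sketch in plain Python, assuming one-input functions and the rectified linear unit (ReLU) as the nonlinearity. ReLU is one common choice, not necessarily the one used in the talk, and the helper names are hypothetical. Chaining two linear functions yields another linear function. Two ReLU neurons combined linearly compute the absolute value, which no single linear function can represent.

```python
def linear(w, b):
    """Return a one-input linear function x -> w*x + b (a 1-D perceptron)."""
    return lambda x: w * x + b


def relu(z):
    """Rectified linear unit, one common choice of nonlinearity."""
    return max(0.0, z)


def neuron(w, b):
    """A neuron: a linear function followed by a nonlinearity."""
    f = linear(w, b)
    return lambda x: relu(f(x))


# Chaining two linear functions gives another linear function:
# g(f(x)) = 3*(2x + 1) - 4 = 6x - 1.
f, g = linear(2.0, 1.0), linear(3.0, -4.0)
collapsed = linear(6.0, -1.0)
for x in [-2.0, 0.0, 5.0]:
    assert g(f(x)) == collapsed(x)

# With a nonlinearity, two neurons added together (a final linear step
# with weights 1 and 1) compute |x|, which no w*x + b can represent.
h1, h2 = neuron(1.0, 0.0), neuron(-1.0, 0.0)


def abs_net(x):
    return h1(x) + h2(x)


print([abs_net(x) for x in [-2.0, 0.0, 3.0]])  # prints [2.0, 0.0, 3.0]
```

The kink at zero in |x| is exactly the kind of shape that stacking linear functions alone can never produce.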

In his excellent book Thinking, Fast and Slow, Daniel Kahneman describes humans as having two thought systems, System 1 and System 2. Conscious thought is performed by System 2, but it is only a fraction of our cognition. Most of our thinking is done below conscious awareness by System 1. This subconscious thought encompasses our fast gut reactions and our ability to recognize patterns. The combination of System 1 and System 2 endows us with an intelligence that is broad and flexible, and researchers in artificial intelligence have been trying for decades to build computers that match this capability. Current computers do a decent job at the kind of logical reasoning embodied by System 2, but they have been hampered by the lack of a robust System 1.

Consider how toddlers can recognize abstractly drawn animals in children’s books. A cow in one of these books doesn’t look like a real cow at all, yet children can pick up on one or two “cow features” and identify the animal as such. Our computers can make logical deductions and can even play chess better than any human, but their lack of a powerful System 1 prevents them from achieving the supple intelligence necessary to reliably recognize cows or to follow conversations that even a child could understand.

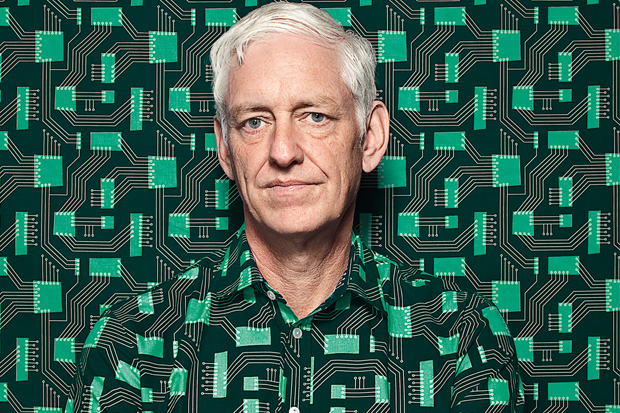

This is beginning to change. A more powerful System 1 for our computers is emerging from a machine learning method called deep learning. Deep learning systems are artificial neural networks with many layers. Neural networks are rough mathematical approximations of how the human brain processes information. They have been around since the 1940s, but only in the last eight years have Geoffrey Hinton and others figured out how to train networks with many layers. A large number of layers is what allows the networks to represent the complicated features necessary for System 1 thinking. These layers are analogous to the hierarchy of the human visual system, where objects are represented as compositions of simpler features.
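Structurally, a network with many layers is the neuron described earlier repeated in width and depth: each layer's outputs become the next layer's inputs. The following minimal forward-pass sketch in plain Python assumes fully connected layers, ReLU nonlinearities, and randomly initialized weights; the layer sizes and helper names are hypothetical. It shows only how layers are stacked. It says nothing about how such networks are trained, which is the hard problem the text credits Hinton and others with solving.

```python
import random


def relu(z):
    """Rectified linear unit, used here as each neuron's nonlinearity."""
    return max(0.0, z)


def dense_layer(inputs, weights, biases):
    """One fully connected layer: each weight row plus its bias is one neuron."""
    return [relu(sum(w * x for w, x in zip(row, inputs)) + b)
            for row, b in zip(weights, biases)]


def random_layer(n_in, n_out, rng):
    """Hypothetical random initialization, for illustration only (no training)."""
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def forward(x, layers):
    """Feed x through each layer in turn; each output is the next layer's input."""
    for weights, biases in layers:
        x = dense_layer(x, weights, biases)
    return x


rng = random.Random(0)
sizes = [4, 8, 8, 8, 2]  # input width, three hidden layers, output width
layers = [random_layer(a, b, rng) for a, b in zip(sizes, sizes[1:])]
out = forward([0.5, -1.0, 2.0, 0.0], layers)
print(len(out))  # prints 2
```

Adding depth here is just a longer `sizes` list. Making the extra layers learn useful intermediate features is what requires the training advances the text describes.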