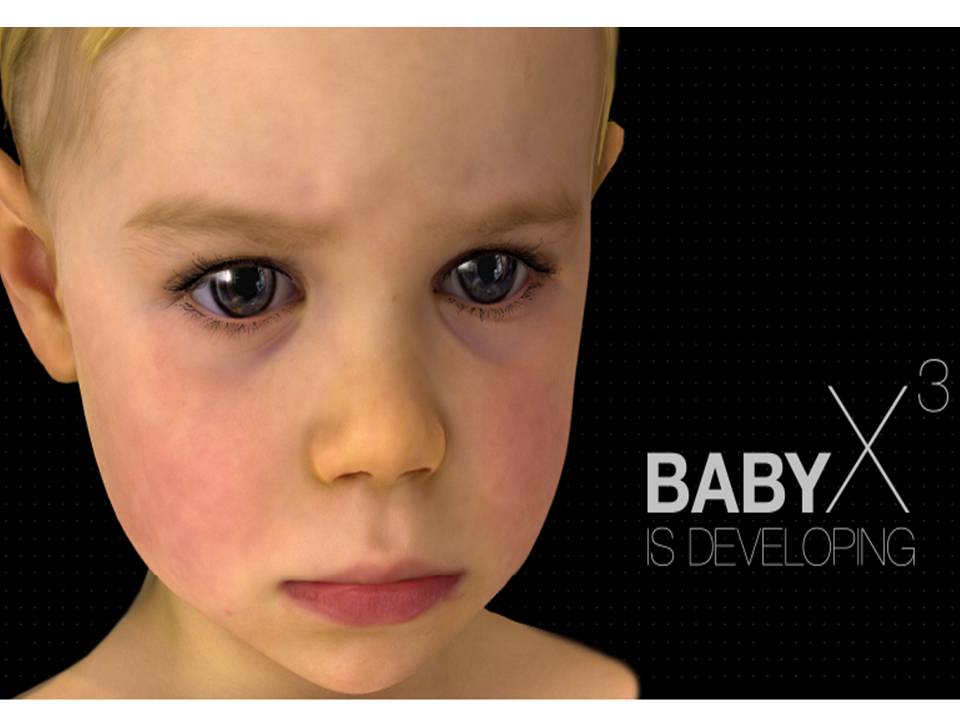

Can you visualize a machine that can laugh and cry, learn and dream, and voice its own responses to how it perceives you are feeling?

If you can’t, you should see BabyX…

The Laboratory for Animate Technologies at Auckland’s Bioengineering Institute is building ‘live’ computational models of the face and brain by combining research in bioengineering, computational and theoretical neuroscience, artificial intelligence and interactive computer graphics.

BabyX is a virtual animated baby that learns and reacts like a human baby. It is an experimental, computer-generated psychobiological simulation of an infant that learns and interacts in real time. Artificial intelligence algorithms handle BabyX’s “learning” and its interpretation of voice and images, so that it can make sense of the situation around it. As a result, BabyX can express itself naturally, learn to read, recognize objects and understand what is happening in front of it.

Mark Sagar, who as part of the team at director Peter Jackson’s Weta Digital worked on the computer-generated faces in films such as King Kong and Avatar, says that “Baby X is an exploration of emotional interaction through an interactive avatar.”

BabyX integrates realistic facial simulation with computational neuroscience models of neural systems involved in interactive behaviour and learning. Mark Sagar and his team are mapping the neural circuitry beneath the wrinkled nose, the puckered mouth, the narrowed eyes, and thousands of other facial signals to make computers look and act more like human beings.

How are these cells synchronized toward a common goal that we can perceive?

Every single cell in our body seems to create an ethereal electromagnetic body that serves as our data storage. We can access any information we need, as long as we have the right connections to grab it. Humans may be able to access only 2 or 3 percent of that data; what we need to discover is how to access the other 97 percent. This cannot be created by an external chip, by rewiring the brain, or by any hardware. Ancient scripts describe not one but two energy fields where all data is stored: the first is limited and personal, and the other is unlimited and universal.

MARCINI